Hey you, cyberpunk wunderkind ready to shift all of the paradigms and break out of every box you can find. Do you want to run a super-powerful, mind-boggling artificial intelligence right on your own computer? Well you can, and you’ve been able to for a while. But now Nvidia is making it super easy, barely an inconvenience to do so, with a preconfigured generative text AI that runs off its consumer-grade graphics cards. It’s called “Chat with RTX,” and it’s available as a beta right now.

Chat with RTX is entirely text-based, and it comes “trained” on a large database of public text documents owned by Nvidia itself. In its raw form the model can “write” well enough, but its actual knowledge appears to be extremely limited. For example, it can give you a detailed breakdown of what a CUDA core is, but when I asked, “What’s a baraccuda?” it answered with, “It appears to be a type of fish” and cited a Cyberpunk 2077 driver update as a reference. It could easily give me a seven-verse limerick about a beautiful printed circuit board (not an especially good one, mind you, but one that fulfilled the prompt), but couldn’t even attempt to tell me who won the War of 1812. If nothing else, it’s an example of how deeply large language models depend on a huge array of data input in order to be useful.

Michael Crider/Foundry

To that end, you can manually extend Chat with RTX’s capabilities by pointing it to a folder full of .txt, .pdf, and .doc files to “learn” from. This might be a bit more useful if you need to search through gigabytes of text and you need context at the same time. Shockingly, as someone whose entire work output is online, I don’t have much in the way of local text files for it to crawl.

Pictured: My graphics card studying the Bible. Hard.


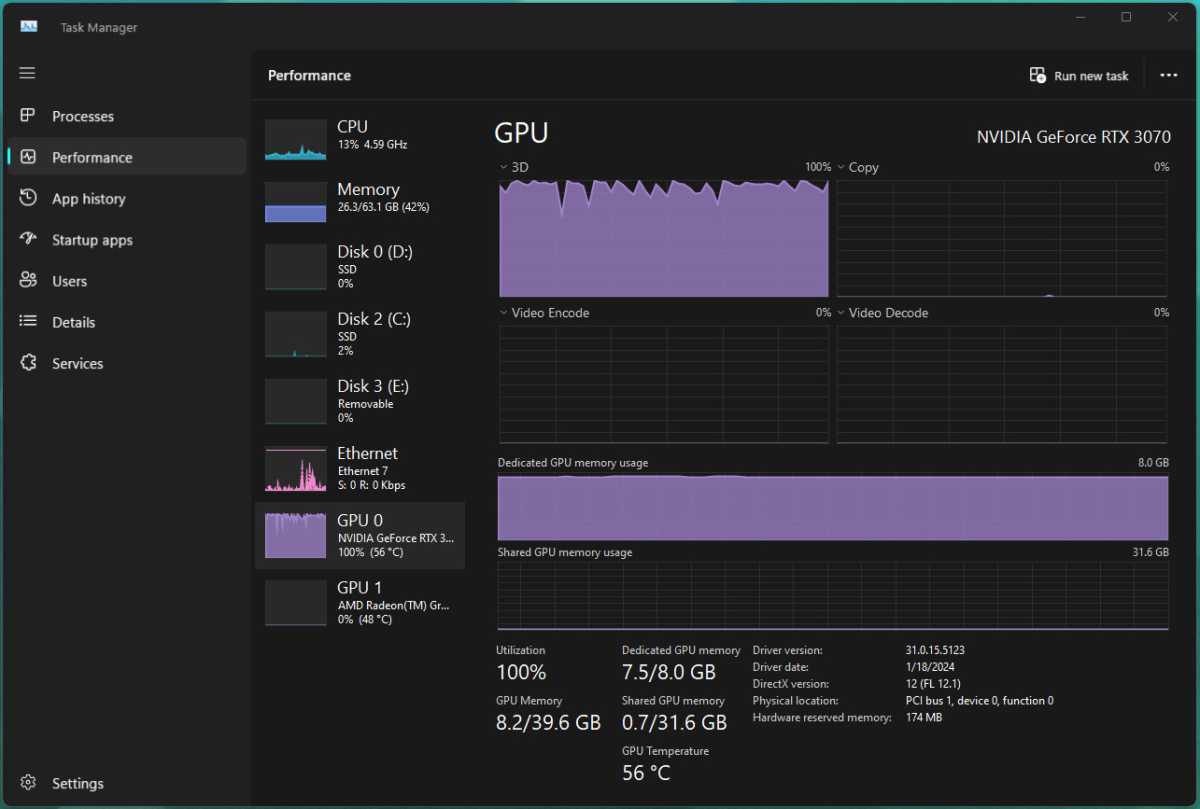

To try out this potentially more useful capability, I downloaded publicly available text files of various translations of the Bible, hoping to give Chat with RTX some quizzes that my old Sunday School teachers would probably be able to nail. But after an hour the tool was still churning through less than 300MB of text files and running my RTX 3070 at nearly 100 percent, endlessly, so more qualitative evaluation will have to wait for another day.
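If you want to know what you’re in for before pointing Chat with RTX at a folder, a quick tally of the supported files and their combined size can help. The Python sketch below is not part of Nvidia’s tool. The function name `summarize_folder` is made up for illustration, and it assumes subfolders should be counted too, which may not match how Chat with RTX actually scans a directory.

```python
from pathlib import Path

# File types the article lists as readable by Chat with RTX.
SUPPORTED_EXTENSIONS = {".txt", ".pdf", ".doc"}


def summarize_folder(folder):
    """Return (file_count, total_bytes) for supported files under folder.

    Hypothetical helper: recursing into subfolders is an assumption,
    not documented Chat with RTX behavior.
    """
    count = 0
    total_bytes = 0
    for path in Path(folder).rglob("*"):
        if path.is_file() and path.suffix.lower() in SUPPORTED_EXTENSIONS:
            count += 1
            total_bytes += path.stat().st_size
    return count, total_bytes


if __name__ == "__main__":
    import sys

    # Use the folder given on the command line, or the current directory.
    folder = sys.argv[1] if len(sys.argv) > 1 else "."
    count, total_bytes = summarize_folder(folder)
    print(f"{count} supported files, {total_bytes / 1_000_000:.1f} MB")
```

Run it with the folder path as its only argument. If the total lands anywhere near the 300MB that kept my RTX 3070 busy for over an hour, consider starting with a smaller subset.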

In order to run the beta, you’ll need Windows 10 or 11 and an RTX 30- or 40-series GPU with at least 8GB of VRAM. It’s also a pretty massive 35GB download for the AI program and its database of default training materials, and Nvidia’s file server seems to be getting hit hard at the moment, so just getting this thing up on your PC might be an exercise in patience.
